Intelligence Without Insight

Why Corporate Risk Products Are Failing Decision-Makers in Conflict Environments

Boards and executive teams increasingly operate under the assumption that exposure to geopolitical and security risk is being adequately managed through the procurement of external intelligence products. Heat maps, dashboards and long-form regional assessments are treated as evidence of coverage. In practice, many of these outputs provide a veneer of sophistication while failing to materially support decision-making in volatile environments.

The issue is not a lack of information but a failure of translation: specifically, the inability to convert observable events into calibrated, decision-relevant risk positions. This weakness becomes most visible in active or near-active conflict environments, where the consequences of misjudgement are immediate and compounding (RSM Malta, 2024; StandardFusion, 2026).

What is presented as “intelligence” is, in many cases, structured narrative layered over weak or opaque methodological foundations.

The structural problem: abstraction over evidence

Contemporary corporate risk products typically rely on three core components: country-level risk maps, probability–impact matrices and narrative reporting. While each has standalone value, their combined application, without methodological transparency, creates an illusion of precision that is difficult to interrogate.

Country risk maps compress complex, multi-domain realities into simplified categorical outputs. Political instability, infrastructure degradation, regulatory volatility and physical security threats are aggregated into a single rating, often without disclosure of indicator selection or weighting logic (RIMS, 2017; AFERM, 2017). This aggregation limits the ability of users to challenge or validate the assessment.
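
The masking effect of aggregation can be shown with a minimal sketch. The domain names follow the paragraph above, but the 1-5 scale, the scores and the equal weights are illustrative assumptions, not any vendor's actual method.

```python
# Hypothetical domain scores on an assumed 1-5 scale (illustrative values only).
domain_scores = {
    "political_instability": 2,
    "infrastructure_degradation": 2,
    "regulatory_volatility": 2,
    "physical_security": 5,
}

# Assumed equal weights; real products rarely disclose their weighting logic.
weights = {name: 0.25 for name in domain_scores}


def composite_rating(scores, weights):
    """Weighted average of domain scores: the single number a country map displays."""
    return sum(scores[name] * weights[name] for name in scores)


composite = composite_rating(domain_scores, weights)
worst_domain = max(domain_scores, key=domain_scores.get)

print(f"Composite rating: {composite:.2f}")
print(f"Worst domain: {worst_domain} = {domain_scores[worst_domain]}")
```

Under these assumptions the composite sits mid-scale even though one domain is at the maximum. A user who sees only the composite has no means of detecting that.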

Probability–impact matrices present a similar issue. Although widely adopted, they frequently rely on loosely defined scales and subjective judgement, particularly in relation to low-frequency, high-impact events that characterise geopolitical crises (GARP, 2022; SRA Global, 2025). The structure appears robust; the calibration is often not.
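
A short sketch illustrates how coarse scales can obscure material differences. The band edges and the two example risks are hypothetical, chosen only to show the mechanism; they do not describe any specific product.

```python
from bisect import bisect_right

# Hypothetical band edges; vendors' actual scales are often loosely defined.
PROBABILITY_EDGES = [0.05, 0.20, 0.50, 0.80]  # annual probability -> band 1..5
IMPACT_EDGES = [1e5, 1e6, 1e7, 1e8]           # loss in USD -> band 1..5


def band(value, edges):
    """Return the 1-based ordinal band that a value falls into."""
    return bisect_right(edges, value) + 1


def matrix_cell(probability, loss):
    """Map a (probability, loss) pair to its (likelihood band, impact band) cell."""
    return band(probability, PROBABILITY_EDGES), band(loss, IMPACT_EDGES)


def expected_loss(probability, loss):
    return probability * loss


# Two illustrative risks near opposite edges of the same bands.
risk_a = (0.06, 1.1e7)
risk_b = (0.19, 9.9e7)

print(matrix_cell(*risk_a), expected_loss(*risk_a))
print(matrix_cell(*risk_b), expected_loss(*risk_b))
```

Both risks land in the same cell, yet their expected losses differ by more than a factor of twenty. The matrix looks structured while discarding exactly the information a decision-maker needs.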

Narrative reporting, which typically accompanies these tools, is often comprehensive and well-articulated. However, it frequently lacks a transparent link between observed indicators and assigned risk ratings. The descriptive layer is strong; the analytical linkage is weak (Riskonnect, 2022; Deloitte, n.d.).

This disconnect is not incidental; it is structural.

When narrative and reality diverge

A recent regional assessment of a large-scale Gulf–Iran escalation illustrated this divergence. The report documented widespread disruption across multiple domains, including coordinated strikes across Iranian territory, effective closure of the Strait of Hormuz due to insurance withdrawal, force majeure declarations across LNG supply chains, airspace restrictions and direct targeting of critical infrastructure.[1]

The narrative described halted exports, stranded vessels, production constraints and evacuation guidance for non-essential personnel. In isolation, this reflected a high-intensity operating environment with clear implications for business continuity.

However, the associated risk ratings remained comparatively restrained. Iraq, despite export disruption, airspace closure and militia activity, was classified with only moderate operational risk. Similarly, Gulf states experiencing direct strikes and infrastructure disruption were positioned within moderate or moderate-high bands.

The issue is not interpretation, but calibration. If observable indicators, such as infrastructure targeting, logistics disruption and operational constraints, do not trigger corresponding escalation in risk ratings, then the underlying model is either constrained or misaligned (Turnkey Consulting, 2019; RSM Malta, 2024).
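
One way to test for this kind of misalignment is a simple consistency check between observed indicators and the assigned rating. The sketch below is a hypothetical rule set: the rating bands and the minimum rating implied by each indicator are assumptions made for illustration, not thresholds taken from the report discussed above.

```python
# Assumed ordinal rating bands, lowest to highest.
RATING_ORDER = ["low", "moderate", "moderate-high", "high", "extreme"]

# Hypothetical rule: each observed indicator implies a minimum rating.
INDICATOR_FLOORS = {
    "infrastructure_targeting": "high",
    "logistics_disruption": "moderate-high",
    "operational_constraints": "moderate-high",
}


def implied_floor(observed):
    """Return the highest minimum rating implied by the observed indicators."""
    floors = [INDICATOR_FLOORS[name] for name in observed if name in INDICATOR_FLOORS]
    if not floors:
        return RATING_ORDER[0]
    return max(floors, key=RATING_ORDER.index)


def is_miscalibrated(assigned, observed):
    """True when the assigned rating sits below the floor the evidence implies."""
    return RATING_ORDER.index(assigned) < RATING_ORDER.index(implied_floor(observed))


observed = ["infrastructure_targeting", "logistics_disruption"]
print(is_miscalibrated("moderate", observed))
print(is_miscalibrated("high", observed))
```

The value of such a check lies less in the specific thresholds than in making them explicit, so that a rating which contradicts its own evidence can be challenged.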

In such cases, the output ceases to function as a decision-support tool and instead becomes a presentation artefact.

The influence of AI-generated analysis

The integration of generative AI into intelligence production has introduced additional complexity. While such tools improve efficiency and synthesis, they also reinforce existing weaknesses when not applied with discipline.

AI-assisted outputs tend towards fluency and structural coherence but may lack analytical depth. Common characteristics include repetition, generalisation and reliance on historical analogy without clear operational relevance (MIT Sloan, 2024). Assertions are often presented without defined thresholds or supporting datasets, reducing their value in decision-making contexts.

The result is an expansion of narrative volume without a corresponding increase in actionable insight. For decision-makers, this creates a risk of overconfidence in outputs that appear comprehensive but lack evidential grounding (AuditBoard, 2025; LineSlip Solutions, 2026).

Static models in dynamic environments

A broader structural issue lies in the persistence of static frameworks within dynamic threat environments.

Many corporate risk systems operate on update cycles that are incompatible with the pace of geopolitical crises. Weekly or monthly refresh intervals are insufficient in environments where conditions shift within hours, leading to reliance on outdated assessments (RIMS, 2017; AFERM, 2017).
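
The mismatch between refresh interval and crisis tempo can be expressed as a simple staleness test. In this sketch the six-hour tolerance and the timestamps are assumptions for illustration; an organisation would set its own tolerance per environment.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical tolerance for an active-conflict environment.
MAX_AGE = timedelta(hours=6)


def is_stale(assessed_at, now, max_age):
    """True when an assessment is older than the tolerance for its environment."""
    return now - assessed_at > max_age


# Illustrative timestamps: a report issued six days before the decision point.
now = datetime(2026, 3, 20, 12, 0, tzinfo=timezone.utc)
weekly_report = datetime(2026, 3, 14, 9, 0, tzinfo=timezone.utc)

print(is_stale(weekly_report, now, MAX_AGE))
```

A weekly product fails this test for most of its life in any environment where the tolerance is measured in hours.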

At the same time, the underlying models remain opaque. Vendors increasingly reference AI-enhanced scoring or continuous monitoring capabilities, yet provide limited transparency regarding data inputs, weighting mechanisms or calibration processes (Seerist, 2025; Deloitte, n.d.). This restricts the ability of users to evaluate the reliability of outputs.

Critically, most assessments remain at the country level, despite risk being experienced at the level of assets, corridors and operational dependencies. This mismatch reduces the practical value of the analysis for organisations operating in complex environments (Rule, 2025).

Re-grounding risk: LICREPH as a proprietary, evidence-led framework

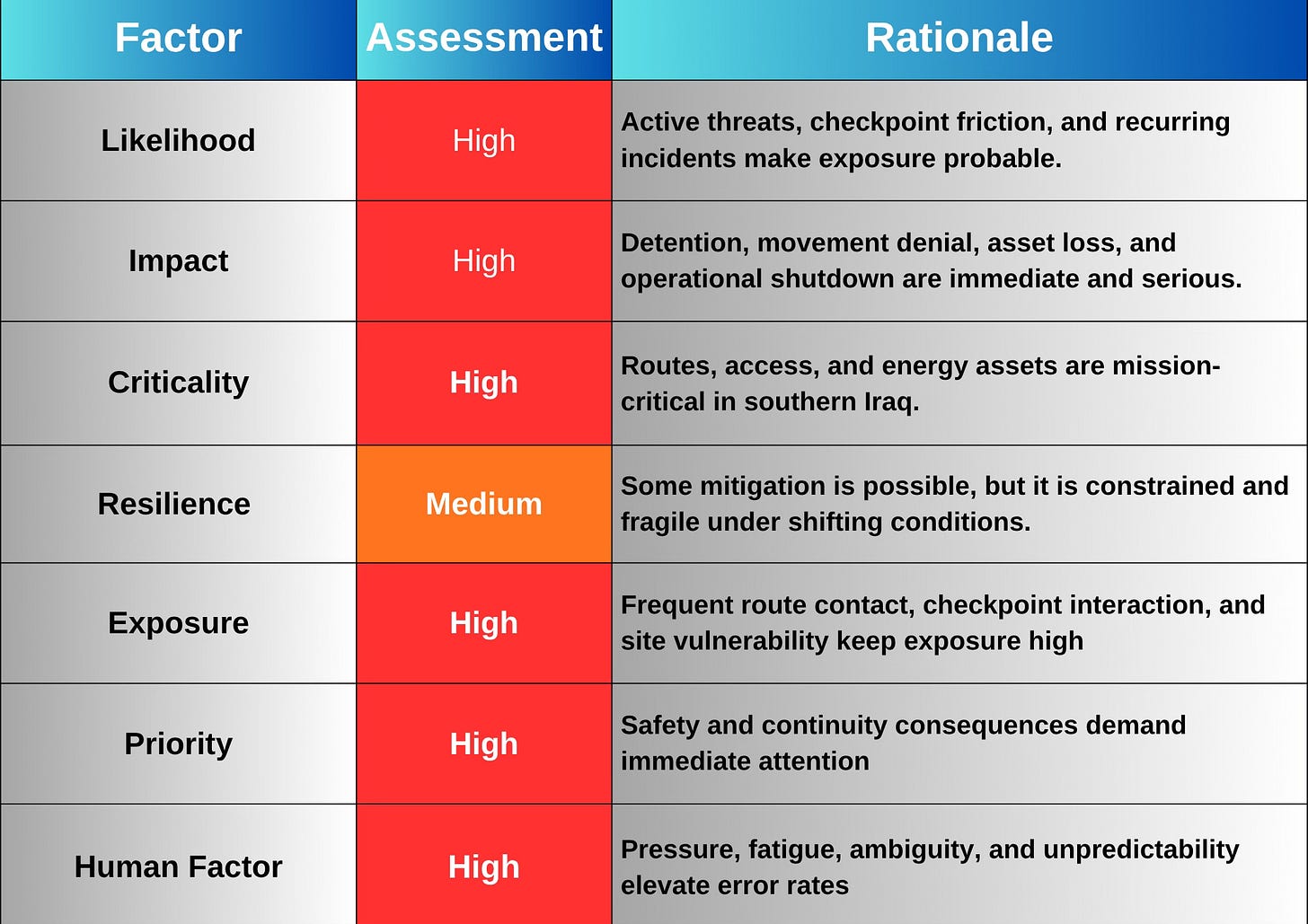

Addressing these limitations requires a shift from narrative-driven assessment to structured, evidence-based risk modelling. At Pillars Global, this has led to the development of LICREPH, a proprietary risk framework designed specifically for environments where conventional models consistently fail.

LICREPH (Likelihood, Impact, Criticality, Resilience, Exposure, Priority and Human Factors) was developed from operational conditions rather than theoretical constructs. It reflects the need to translate real-world disruption into defensible, decision-relevant risk outputs.

Unlike traditional matrices, LICREPH disaggregates risk into defined components:

Likelihood: derived from observable indicators and incident patterns

Impact: assessed across operational, financial and human dimensions

Criticality: linked to the strategic importance of affected assets

Resilience: reflecting organisational recovery capacity

Exposure: capturing proximity and vulnerability

Priority: aligning risk with decision thresholds

Human Factors: accounting for behavioural and leadership variables

This structure introduces transparency. Each component is measurable, adjustable and directly linked to observable inputs. It also enables calibration, allowing scores to be tested against historical events and refined over time (GARP, 2022; RSM Malta, 2024).
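
Testing scores against historical events can take several forms. The sketch below uses the Brier score, a standard measure of probabilistic forecast accuracy, as one possible approach; it is not a description of how LICREPH itself is calibrated, and the forecasts and outcomes are invented for illustration.

```python
def brier_score(forecasts, outcomes):
    """Mean squared difference between forecast probabilities and 0/1 outcomes.

    Lower is better; 0.0 is a perfect forecast.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must be the same length")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical likelihood estimates issued before four past events,
# and whether each event subsequently occurred (1) or not (0).
forecasts = [0.9, 0.2, 0.7, 0.1]
outcomes = [1, 0, 1, 0]

# A non-committal baseline that always forecasts 50 per cent.
baseline = [0.5] * len(outcomes)

print(brier_score(forecasts, outcomes))
print(brier_score(baseline, outcomes))
```

Comparing a model's score with a non-committal baseline gives a simple, auditable indication of whether its likelihood estimates carry information.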

Crucially, LICREPH enforces alignment between evidence and output. Where indicators escalate, the model requires corresponding escalation in risk scores. This removes the tendency to moderate outputs for consistency or presentation.

The table below presents the current LICREPH ground truth for southern Iraq as of Friday, 20 March 2026.

When applied to complex environments such as southern Iraq or the wider Gulf, LICREPH produces materially different outcomes from traditional models.

Simultaneous disruption to airspace, energy exports and critical infrastructure directly elevates Likelihood, Exposure and Impact scores. Assets such as export terminals, refineries or data centres increase Criticality, amplifying the overall risk position. Where resilience is limited or degraded, this further compounds the score.

The result is not a moderated average, but a defensible risk state grounded in operational conditions.

This distinction is significant. Traditional models often smooth or average risk to maintain consistency. LICREPH, by design, does not. It reflects the environment as it is, not as it is convenient to present.

Integrating macro and micro analysis

While LICREPH forms the proprietary decision engine, it is supported by established analytical structures.

At the macro level, PESTLE provides a disciplined framework for assessing environmental pressures across political, economic, social, technological, legal and environmental domains (CIPD, 2025; Consulterce, 2021). These dimensions inform Likelihood and Exposure within LICREPH.

At the micro level, impact models such as PEARS support asset-specific consequence analysis across people, environment, assets, regulatory exposure and systems (SIL Safe, 2026; SynergenOG, 2023). These feed directly into Impact and Criticality scoring.

Within this structure, PESTLE and PEARS provide context and detail. LICREPH integrates them into a coherent, calibrated risk output.

Implications for boards and risk leaders

For boards and risk leaders, the implication is not the rejection of external intelligence, but a more disciplined engagement with it.

Risk outputs should be interrogated for methodological transparency, data provenance and calibration. Where these elements are absent, outputs should be treated as contextual inputs rather than decision anchors (AuditBoard, 2025; StandardFusion, 2026).

More fundamentally, organisations must retain ownership of risk interpretation. External vendors can inform, but they cannot substitute for internal judgement grounded in operational understanding.

In high-risk environments, the cost of miscalibrated risk is operational, not theoretical.

Conclusion

Corporate risk intelligence has evolved in presentation but not consistently in analytical rigour. Visualisation, narrative and delivery have improved, yet the underlying challenge remains unresolved: translating complex, dynamic environments into calibrated, decision-relevant outputs.

The gap between intelligence and insight persists because models remain opaque, static and insufficiently anchored in observable reality.

Proprietary frameworks such as LICREPH, developed from operational necessity rather than theoretical design, offer a more transparent and accountable approach. By forcing alignment between observed events and risk outputs, they move risk assessment closer to the realities decision-makers must confront.

In environments defined by volatility and consequence, that distinction is not academic.

It is operational.

References

AFERM (2017) The strengths and weaknesses of country risk maps. Available at: https://www.aferm.org (Accessed: 20 March 2026).

AuditBoard (2025) New risk intelligence report: inconsistent execution is the primary barrier stalling enterprise AI deployment. Available at: https://www.auditboard.com/blog (Accessed: 20 March 2026).

CIPD (2025) PESTLE analysis. Available at: https://www.cipd.org/uk/knowledge/factsheets/pestle-analysis-factsheet (Accessed: 20 March 2026).

Consulterce (2021) PESTLE analysis: the macro-environmental framework explained. Available at: https://consulterce.com/blog/pestle-analysis (Accessed: 20 March 2026).

Deloitte (no date) The connecting force: risk intelligence platforms enabling decisions. Available at: https://www2.deloitte.com (Accessed: 20 March 2026).

GARP (2022) The risk matrix approach: strengths and limitations. Available at: https://www.garp.org/risk-intelligence (Accessed: 20 March 2026).

LineSlip Solutions (2026) Risk intelligence for corporate risk leaders. Available at: https://www.lineslipsolutions.com (Accessed: 20 March 2026).

MIT Sloan Management Review (2024) AI-related risks test the limits of organizational risk management. Available at: https://sloanreview.mit.edu/article/ai-related-risks-test-the-limits-of-organizational-risk-management (Accessed: 20 March 2026).

RIMS (2017) The strengths and weaknesses of country risk maps. Available at: https://www.rmmagazine.com/articles/article/2017/11/01/the-strengths-and-weaknesses-of-country-risk-maps (Accessed: 20 March 2026).

Riskonnect (2022) What is risk intelligence? A comprehensive guide. Available at: https://riskonnect.com/risk-management/what-is-risk-intelligence (Accessed: 20 March 2026).

RSM Malta (2024) ERM – limitations of traditional risk management. Available at: https://www.rsm.global/malta/insights/erm-limitations-traditional-risk-management (Accessed: 20 March 2026).

Rule, J. (2025) Geopolitical risk: 5 red flags that put your third-party vendors at risk. Available at: https://www.rule.co.uk (Accessed: 20 March 2026).

Seerist (2025) AI-driven geopolitical risk monitoring platforms. Available at: https://seerist.com (Accessed: 20 March 2026).

SIL Safe (2026) PEAR model – People, Environment, Asset, Reputation. Available at: https://www.silsafe.net/glossary/pear (Accessed: 20 March 2026).

SRA Global (2025) The fundamental flaw of the risk matrix tool. Available at: https://www.sra-global.com/blog (Accessed: 20 March 2026).

StandardFusion (2026) Enterprise risk management vs traditional risk management. Available at: https://www.standardfusion.com/blog (Accessed: 20 March 2026).

SynergenOG (2023) What is PEAR in risk assessment? Available at: https://www.synergenog.com/knowledge/what-is-pear-risk-assessment (Accessed: 20 March 2026).

Turnkey Consulting (2019) The limitations of traditional risk management. Available at: https://www.turnkey-consulting.com/blog (Accessed: 20 March 2026).